How Long Do Teeth Survive After Root Canal?

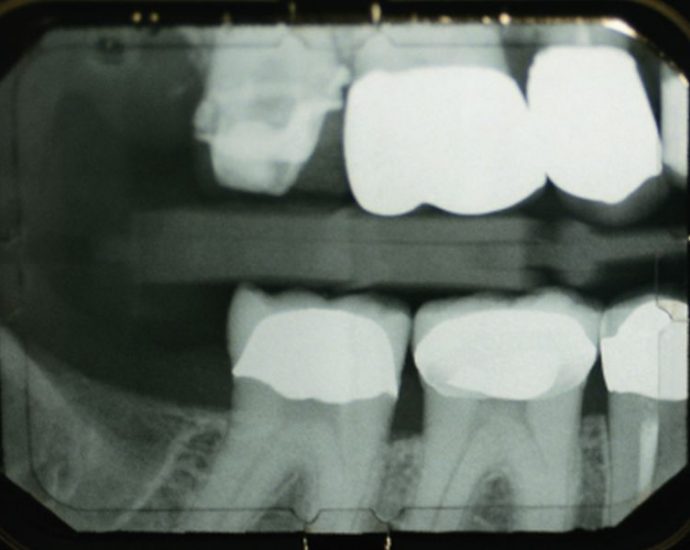

FRIDAY, May 20, 2022 (HealthDay News) — If you’ve had a root canal, you can expect your tooth to survive for about 11 years, researchers say. For a time, root canals can maintain teeth affected by cavities or other problems, but the tooth eventually becomes brittle and dies.